GPT4All Technology for Smart Package Robot (SPR)

Installing GPT4All is straightforward.

Installing GPT4All on a Windows Computer

To use GPT4All together with your SPR, you must install it either on your local computer or on a machine in your network.

In this chapter, we will walk through the steps to install GPT4All on a Windows computer. GPT4All is an open-source software ecosystem that allows you to train and deploy powerful large language models on everyday hardware such as laptops, desktops, and servers. It is optimized to run inference of 7-13 billion parameter large language models on CPUs.

For more information and details on how to install GPT4All on your local computer, see the GPT4All GitHub repository and the GPT4All website.

Step 1: Install GPT4All on your local Computer or in your Network

You will find a Windows installer for GPT4All here.

Step 2: Download Pre-Quantized Models

GPT4All uses neural network quantization to make large language models more efficient to run on consumer hardware. To use GPT4All, you need to download pre-quantized models. You can find a list of pre-quantized models on the GPT4All website, or in the chat client under "Downloads" in the menu on the left side.
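To get a feeling for why quantization matters on consumer hardware, here is a rough back-of-the-envelope sketch. It only multiplies the number of parameters by the bits stored per weight. The bit widths used (16 and 4) are illustrative assumptions and are not taken from the GPT4All documentation. Real model files are somewhat larger, because quantized formats store additional scaling data and metadata.

```python
def model_size_gib(num_parameters: int, bits_per_weight: int) -> float:
    """Approximate size of the raw weights in GiB (ignores file overhead)."""
    total_bytes = num_parameters * bits_per_weight / 8
    return total_bytes / (1024 ** 3)


# Parameter counts from the 7-13 billion range mentioned in this chapter.
# The bit widths are illustrative assumptions.
for params in (7_000_000_000, 13_000_000_000):
    for bits in (16, 4):
        size = model_size_gib(params, bits)
        print(f"{params / 1e9:.0f}B parameters at {bits} bits per weight: "
              f"about {size:.1f} GiB")
```

Compare the result with the free RAM of the computer on which GPT4All will run before you pick a model.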

Step 3: How to start GPT4All and how to set it up

Now that everything is installed, you can start GPT4All. Follow these steps:

The installer places a GPT4All icon on your desktop. Start GPT4All with this icon.

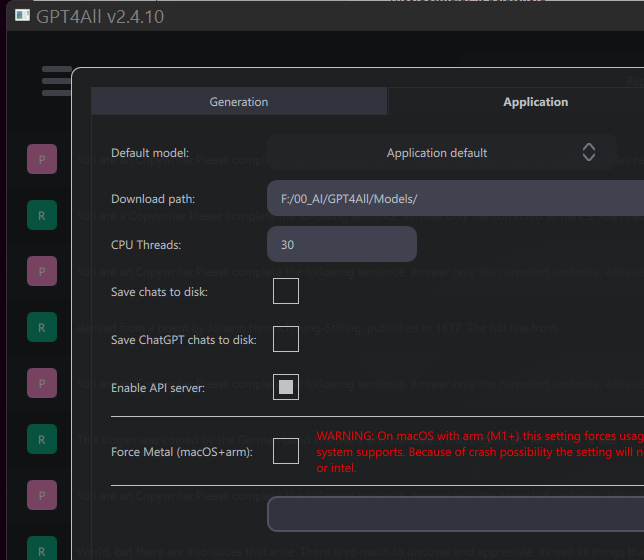

Step 4: Open Settings

Click on "Settings" to open the settings shown below.

Step 5: Enable the API Server

Enable the API server in GPT4All so that the SPR can connect to GPT4All via HTTP.

You need to do this only once.

Once the server is enabled, it stays enabled every time GPT4All starts.
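If you want to check the connection yourself before using the SPR, the following minimal sketch sends one request to the API server over HTTP. It rests on assumptions that you should verify in your installation: that the server listens on `http://localhost:4891/v1` and that it accepts OpenAI-style chat completion requests. The helper names `build_chat_request` and `ask` and the model name are hypothetical, and this is not how the SPR itself talks to GPT4All.

```python
import json
import urllib.request

# Assumption: address of the local GPT4All API server.
# Check the port shown in the GPT4All settings and adjust if needed.
BASE_URL = "http://localhost:4891/v1"


def build_chat_request(prompt: str, model: str,
                       base_url: str = BASE_URL) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request (nothing is sent yet)."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 128,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, model: str, timeout: float = 120.0) -> str:
    """Send the prompt to the server and return the text of the first answer."""
    request = build_chat_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Example call (needs a running GPT4All with the API server enabled;
# replace the placeholder with the name of a model you have downloaded):
# print(ask("Say hello in one sentence.", model="your-model-name"))
```

Local models can take a while to answer on a CPU, which is why the sketch uses a generous timeout.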

Step 6: Download Models and (optionally) enter your OpenAI API Keys

To do so, click on "Downloads" in the menu on the left side.

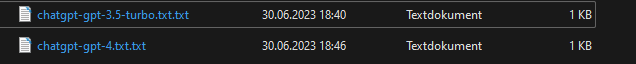

Editor's Note (30.06.2023): As of today, I can enter my OpenAI key in GPT4All, but it is not displayed under "Downloads" the way the other models are.

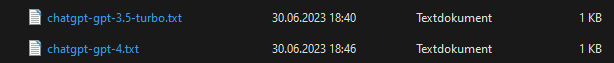

Looking into the models folder, you can see that the key is saved anyway.

GPT4All generated the following two files in your models folder. Each file contains only the OpenAI API key in clear text.

However, I recommend that you create these files yourself: make a ".txt" file with this name and paste your API key into it.

Then restart the GPT4All GUI and it should work.
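If you prefer to create such a key file with a script instead of a text editor, a minimal sketch could look like this. The function name `write_key_file` is hypothetical. The folder and the file name are deliberately left as parameters: use the models folder of your own installation and the file name shown in the screenshot. Remember that the key is stored in clear text, so protect the folder accordingly.

```python
from pathlib import Path


def write_key_file(models_dir: Path, file_name: str, api_key: str) -> Path:
    """Write the API key as plain text into a file inside the models folder."""
    if not models_dir.is_dir():
        raise FileNotFoundError(f"Models folder not found: {models_dir}")
    target = models_dir / file_name
    # The file contains nothing but the key itself, as described above.
    target.write_text(api_key.strip(), encoding="utf-8")
    return target
```

Call it with the path of your models folder, the file name, and your key, then restart the GPT4All GUI as described above.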

Now if you change the filename to:

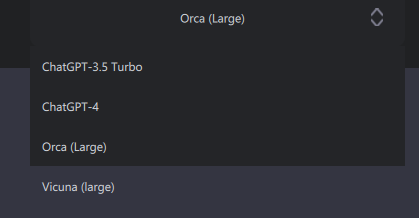

Then you will also see these models in the menu:

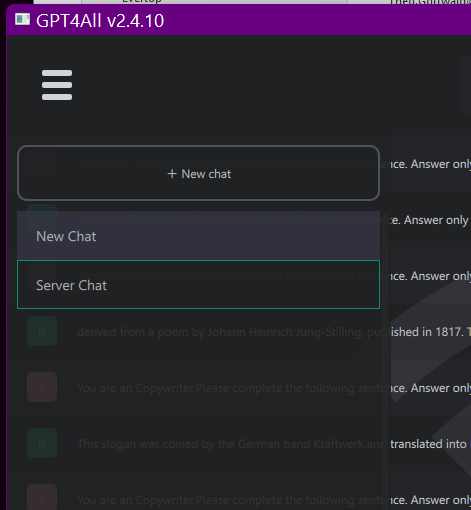

Once the server is running, you can watch it work by pressing the "Server Chat" button.

Open the menu on the left side and click on "Server Chat" to see all dialogs that are currently running over the server.

Considerations

Keep in mind that the inference speed of a local large language model depends on the model size and the number of tokens given as input. It is not advised to prompt local models with large chunks of context, as their inference speed will heavily degrade. If you wish to utilize context windows larger than 750 tokens, you might want to run GPT4All models on a GPU (Details here).
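One way to respect this limit on the client side is to trim the context before sending it to the model. The sketch below keeps only the most recent paragraphs that fit into a budget of 750 tokens, the threshold named above. The token count is only a rough guess: the rule of about four characters per token is an assumption, not the tokenizer of any specific model, and the function names are hypothetical.

```python
import math

TOKEN_BUDGET = 750  # threshold named in the considerations above


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate (assumption: about four characters per token)."""
    return math.ceil(len(text) / chars_per_token)


def fit_context(paragraphs: list[str], question: str,
                budget: int = TOKEN_BUDGET) -> list[str]:
    """Keep the most recent paragraphs that fit next to the question."""
    remaining = budget - estimate_tokens(question)
    kept = []
    # Walk backwards so that the newest paragraphs are kept first.
    for paragraph in reversed(paragraphs):
        cost = estimate_tokens(paragraph)
        if cost > remaining:
            break
        kept.append(paragraph)
        remaining -= cost
    kept.reverse()  # restore the original order
    return kept
```

Older paragraphs are dropped first because the most recent context is usually the most relevant for the next answer. For other use cases a different selection rule may fit better.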