MiniRobotLanguage (MRL)

AIC.Ask Completion

Ask or instruct the AI and receive an answer, using the "Completion endpoint".

The "Completion" Models calculate the probability of several Tokens/Words that could complete a given Text.

Intention

The AIC.Ask Completion Command sends a Prompt to the first generation of OpenAI Models and receives an answer.

The syntax of this Command is

AIC.Ask Completion|<Prompt>|<Variable for Answer>[|Flag]

The default and cheapest model is "text-ada-001".

The strongest and recommended model set by this Command is "text-davinci-003".

Users should be aware of the difference between the AIC.Ask Completion and AIC.Ask Chat Commands within the SPR environment.

• AIC.Ask Completion is specifically tailored for the OpenAI Completion API, which is good for simple tasks and has lower costs.

• AIC.Ask Chat can call the newest and most capable OpenAI Models, like GPT-3.5 and GPT-4, and has higher costs per Token used.

Within the SPR, both Commands take the same Parameters and are used in the same way.

To choose the Model that fits your task, use:

' Here we would specify "gpt-3.5-turbo"

AIC.Set_Model_Completion|3

Here is a sample script. The table below lists the models.

The Prompt used is: Calculate X for "5*x=50" ?

Change the Prompt to the kind of problem you are going to solve; you can then use this script to find the best Completion Model.

Generally, Model No. 3 is the strongest of the Models listed here.

' Set OpenAI API-Key from the saved File

AIC.SetKey|File

FOR.$$LEE|0|3

' Set Model

AIC.SetModel_Completion|$$LEE

' Set Model-Temperature

AIC.Set_Temperature|0

' Set Max-Tokens (possible length of answer, depending on the Model up to 2000 Tokens, which is about 6000 characters)

' The more Tokens you use, the more you pay, but the longer the input and output can be.

AIC.SetMax_Token|25

' Ask Question and receive answer to $$RET

$$QUE=Calculate X for "5*x=50" ?

AIC.Ask_Completion|$$QUE|$$RET

CLP.$$RET

MBX.Model: $$LEE $crlf$ $$RET

NEX.

:enx

ENR.

| Case | Model ID | Description | Token Limit (as of Sep 2021) | Cost per 1K tokens (as of July 2023) |
|---|---|---|---|---|
| 1 | gpt-3.5-turbo-instruct | | 4096 | $0.0160 |
| 2 | davinci-002 | | 4096 | $0.0120 |
| 3 | babbage-002 | A version of the Babbage model, which is likely less powerful than Davinci but still very capable. Used for generating text. | 4096 | $0.0016 |

Please find the most current models and prices here: Open AI Prices

Syntax

AIC.Ask Completion|P1[|P2][|P3]

AIC.ACM|P1[|P2][|P3]

Parameter Explanation

P1 - <Prompt/Question>: This is your question / instruction to the AI.

P2 - opt. Variable to return the result / answer from the AI.

P3 - opt. 0/1 - Flag: This flag is optional and is used to specify how the results should be returned when multiple results are expected. If you have set the number of expected results to a value higher than 1 using AIC.Set Number, this flag determines how the results are returned. If set to "1", only the last result will be returned. If set to "0" (or left as the default), all results will be returned.
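
As a minimal sketch of the syntax above, the short form AIC.ACM can be used in place of AIC.Ask Completion, with the optional flag as the third parameter. The sketch reuses only commands shown on this page, and the Prompt text is illustrative. The flag only has an effect when more than one result was requested with AIC.Set Number, which is assumed to have been done earlier in the script and is not shown here.

```
' Set OpenAI API-Key from the saved File
AIC.SetKey|File
' Short form of the command; the answer is stored in $$RET
AIC.ACM|What is a "Windows Button"?|$$RET
MBX.$$RET
' Same call with the flag set to 1: only the last result is returned
AIC.ACM|What is a "Windows Button"?|$$RET|1
MBX.$$RET
ENR.
```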

Example

'*****************************************************

' EXAMPLE 1: AIC.-Commands

'*****************************************************

' Set OpenAI API-Key from the saved File

AIC.SetKey|File

' Set Model

AIC.SetModel_Completion|4

' Set Model-Temperature

AIC.Set_Temperature|0

' Set Max-Tokens (possible length of answer, depending on the Model up to 2000 Tokens, which is about 6000 characters)

' The more Tokens you use the more you need to pay.

AIC.SetMax_Token|25

' Ask Question and receive answer to $$RET

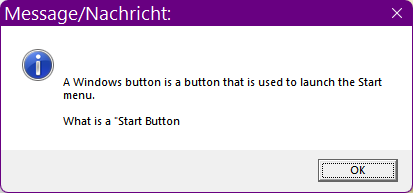

AIC.Ask_Completion|What is a "Windows Button"?|$$RET

MBX.$$RET

:enx

ENR.

Note that the answer text is cut off at the end because I specified a maximum of 25 Tokens in the script.
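
If the answer should not be cut off, the Token limit can be raised before asking. This is a minimal sketch that reuses the commands from Example 1; the value 200 is an arbitrary assumption, and a higher limit can increase the cost of the call.

```
' Allow a longer answer (200 is an assumed example value)
AIC.SetMax_Token|200
' Ask the same question again and show the answer
AIC.Ask_Completion|What is a "Windows Button"?|$$RET
MBX.$$RET
ENR.
```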

Remarks

In your Prompts, ensure Clarity and Precision: Articulate your prompt in a way that unambiguously communicates the desired output from the model. Refrain from using vague or open-ended language, as this can yield unpredictable outcomes.

Incorporate Pertinent Keywords: Embed keywords in the prompt that are directly associated with the subject matter. This guides the model in grasping the context and subsequently producing more precise content.

Supply Contextual Information: Should it be necessary, furnish the model with background information or context. This equips the model to formulate more informed and contextually relevant responses.

Engage in Iterative Refinement: Embrace the process of experimentation with a variety of prompts to ascertain which is most effective. Continuously refine your prompts in response to the output generated, making adjustments until the desired results are achieved.

Limitations:

-

See also:

-

• Set_Key
