MiniRobotLanguage (MRL)

AIC.Set_Model_Completion

Choose one of the OpenAI Models that are available for the "Completion endpoint".

The "Completion" Models calculate the probability of candidate Tokens/Words in order to complete a given Text.

Intention

The AIC.Set_Model_Completion Command is used for specifying the OpenAI model you want to use for Completion-based conversations.

"Completion-based" means that the Model generates the answer that "completes your question" with the highest probability.

The syntax of this Command is

AIC.Set_Model_Completion|<Modelname>, where <Modelname> is the name of the OpenAI model you want to use.

The default model set by this Command is gpt-3.5-turbo-instruct.

OpenAI offers several Completion models that can be used with the Completion endpoint; see the table below.

These models can be used in various applications, such as

•drafting emails,

•writing Python code,

•answering questions,

•creating conversational agents,

•tutoring,

•language translation,

•and even simulating characters for video games.

The AIC.Set_Model_Completion Command selects a Model, which can then be used with the AIC.Ask_Completion Command.

As an alternative to giving a Model name, you can specify a Model number, like this:

' Here we would specify "davinci-002"

AIC.Set_Model_Completion|3
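
As a minimal sketch based on the model table on this page, the following two lines should select the same model, once by name and once by number. Only commands documented on this page are used; a real script would keep just one of the two lines.

```
' Select the model by its name ...
AIC.Set_Model_Completion|babbage-002

' ... or by its number from the table (2 = babbage-002)
AIC.Set_Model_Completion|2
```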

The following table lists the available models, with comments on their general characteristics and applications:

| Number | Model | Comments |
|---|---|---|
| 1 | gpt-3.5-turbo-instruct | Advanced, versatile, suitable for complex and nuanced tasks requiring a higher understanding and instructional capabilities. |
| 2 | babbage-002 | Efficient for straightforward tasks, offers balanced performance for a wide range of applications. |
| 3 | davinci-002 | Highly capable for creative and complex tasks, excels in generating human-like text and detailed responses. |
| 0 | gpt-3.5-turbo-instruct | Default choice, combines advanced capabilities with instructional tuning for a broad spectrum of tasks. |

Syntax

AIC.Set_Model_Completion|P1

AIC.SMC|P1

Parameter Explanation

P1 - Model name. This can be either a number (see the table above) or the name of the model to use.
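
A short sketch of the parameter in use. It assumes that the short form AIC.SMC accepts the same values as the long form, as the Syntax section suggests; this has not been verified here.

```
' Select "gpt-3.5-turbo-instruct" by name, using the short form
AIC.SMC|gpt-3.5-turbo-instruct

' Number 0 selects the default model (see the table above)
AIC.SMC|0
```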

Example

'*****************************************************

' EXAMPLE 1: AIC.-Commands

'*****************************************************

' Set OpenAI API-Key from the saved File

AIC.SetKey|File

' Set Model

AIC.SetModel_Completion|4

' Set Model-Temperature

AIC.Set_Temperature|0

' Set Max-Tokens (possible length of the answer; depending on the Model up to 2000 Tokens, which is about 6000 characters)

' The more Tokens you use, the more you need to pay.

AIC.SetMax_Token|25

' Ask Question and receive answer to $$RET

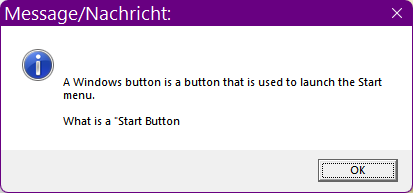

AIC.Ask_Completion|What is a "Windows Button"?|$$RET

MBX.$$RET

:enx

ENR.

Note that the answer text is cut off at the end because the Script specifies a maximum of 25 Tokens, which is too low for the complete answer.
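
To avoid the cut-off, the token limit can be raised. The following variation of Example 1 is only a sketch: the value 200 is an illustrative assumption, not a recommended setting, and the model number 1 (gpt-3.5-turbo-instruct) is taken from the table above.

```
' Set OpenAI API-Key from the saved File
AIC.SetKey|File

' Select "gpt-3.5-turbo-instruct" (number 1 in the table)
AIC.Set_Model_Completion|1

' Set Model-Temperature
AIC.Set_Temperature|0

' Allow a longer answer (assumed value; more Tokens cost more)
AIC.SetMax_Token|200

' Ask Question and receive answer to $$RET
AIC.Ask_Completion|What is a "Windows Button"?|$$RET

MBX.$$RET

:enx

ENR.
```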

Remarks

-

Limitations:

-

See also:

• Set_Key
